Understanding Science

It's even harder than you might think.

I say this in “Modeling Is Not Science”:

In large, complex, and dynamic systems (e.g., war, economy, climate) there is much uncertainty about the relevant parameters, about how to characterize their interactions mathematically, and about their numerical values.

… Consider, for example, a simple model with only 10 parameters. Even if such a model doesn’t omit crucial parameters or mischaracterize their interactions, its results must be taken with large doses of salt. Simple mathematics tells the cautionary tale: An error of about 12 percent in the value of each parameter can produce a result that is off by a factor of 3 (a hemibel); An error of about 25 percent in the value of each parameter can produce a result that is off by a factor of 10. (Remember, this is a model of a relatively small system.)

If you think that models and “data” about such things as macroeconomic activity and climatic conditions cannot be as inaccurate as that, you have no idea how such models are devised or how such data are collected and reported. It would be kind to say that such models are incomplete, inaccurate guesswork. It would be fair to say that all too many of them reflect their developers’ biases.

That’s based on a paper that I wrote in 1981.
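The arithmetic in that passage is easy to check. Here is a minimal sketch, assuming a toy model whose result is simply the product of its 10 parameters, with every parameter off by the same fraction in the same direction (the worst case). The function names are hypothetical and chosen only for illustration; they are not taken from the 1981 paper.

```python
# Toy assumption: the model's result is the product of n_params parameters,
# and each parameter is overstated by the same relative error.


def compounded_error_factor(relative_error: float, n_params: int = 10) -> float:
    """Factor by which the product is off when every parameter is high by relative_error."""
    return (1.0 + relative_error) ** n_params


def per_parameter_error(target_factor: float, n_params: int = 10) -> float:
    """Per-parameter relative error that compounds to target_factor across n_params."""
    return target_factor ** (1.0 / n_params) - 1.0


if __name__ == "__main__":
    for err in (0.12, 0.25):
        factor = compounded_error_factor(err)
        print(f"{err:.0%} error in each of 10 parameters: result off by a factor of {factor:.2f}")
    for target in (3.0, 10.0):
        err = per_parameter_error(target)
        print(f"factor of {target:.0f} needs about {err:.1%} error in each of 10 parameters")
```

Under these assumptions, 12 percent compounds to a factor of about 3.1 and 25 percent to a factor of about 9.3, which matches the "about" in the passage. Errors that partly cancel one another would produce a smaller discrepancy, so these figures are an upper bound for this toy case.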

Now comes “Observing Many Researchers Using the Same Data and Hypothesis Reveals a Hidden Universe of Idiosyncratic Uncertainty” (Proceedings of the National Academy of Sciences, preprint version dated August 10, 2022). Here is the abstract:

This study explores how researchers’ analytical choices affect the reliability of scientific findings. Most discussions of reliability problems in science focus on systematic biases. We broaden the lens to include conscious and unconscious decisions that researchers make during data analysis and that may lead to diverging results. We coordinated 161 researchers in 73 research teams and observed their research decisions as they used *the same data* [emphasis added] to independently test the same prominent social science hypothesis: that greater immigration reduces support for social policies among the public. In this typical case of research based on secondary data, we find that research teams reported both widely diverging numerical findings and substantive conclusions despite identical start conditions. Researchers’ expertise, prior beliefs, and expectations barely predict the wide variation in research outcomes. More than 90% of the total variance in numerical results remains unexplained even after accounting for research decisions identified via qualitative coding of each team’s workflow. This reveals a universe of uncertainty that is otherwise hidden when considering a single study in isolation. The idiosyncratic nature of how researchers’ results and conclusions varied is a new explanation for why many scientific hypotheses remain contested. These results call for epistemic humility and clarity in reporting scientific findings.

Later:

The scientific process confronts researchers with a multiplicity of seemingly minor, yet nontrivial, decision points, each of which may introduce variability in research outcomes. An important but underappreciated fact is that this even holds for what is often seen as the most objective step in the research process: working with the data after it has come in. Researchers can take literally millions of different paths in wrangling, analyzing, presenting, and interpreting their data. The number of choices grows exponentially with the number of cases and variables included….

A bias-focused perspective implicitly assumes that reducing ‘perverse’ incentives to generate surprising and sleek results would instead lead researchers to generate valid conclusions. This may be too optimistic. While removing these barriers leads researchers away from systematically taking invalid or biased analytical paths … , this alone does not guarantee validity and reliability. For reasons less nefarious, researchers can disperse in different directions in what Gelman and Loken call a ‘garden of forking paths’ in analytical decision-making….

There are two primary explanations for variation in forking decisions. The competency hypothesis posits that researchers may make different analytical decisions because of varying levels of statistical and subject expertise that leads to different judgments as to what constitutes the ‘ideal’ analysis in a given research situation. The confirmation bias hypothesis holds that researchers may make reliably different analytical choices because of differences in preexisting beliefs and attitudes, which may lead to justification of analytical approaches favoring certain outcomes post hoc. However, many other covert influences, large and small, may also lead to unreliable - and thus unexplainable idiosyncratic - variation in analytical decision pathways…. Crucially, even when distinct pathways appear equally reasonable to outsiders, seemingly minor variations between them may add up and interact to produce widely varying outcomes.

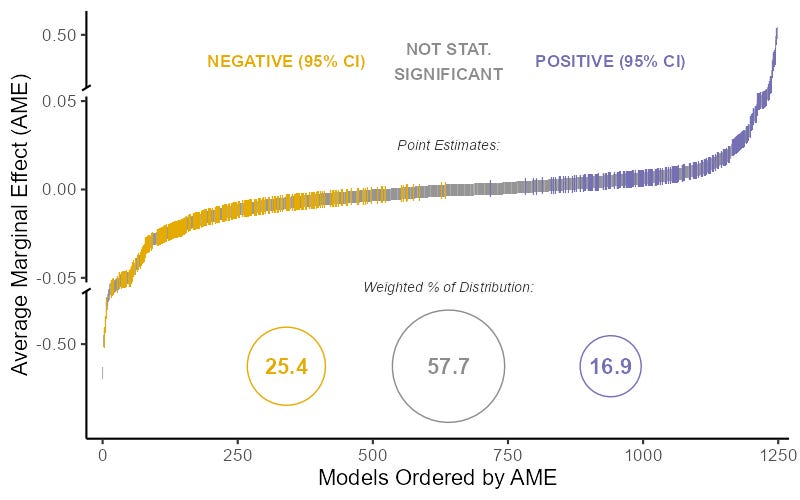

How much variation was there in the results reported by 71 research teams (2 of the original 73 dropped out)? This much:

Fig. 1 [above] visualizes the substantial variation of numerical results reported by 71 researcher teams who analyzed the same data. Results are diffuse: Little more than half the reported estimates were statistically not significantly different from zero at 95% CI, while a quarter were significantly different and negative, and 16.9 percent were statistically significant and positive.

In summary:

In a model that accounts for more than a few variables, uncertainty about the values of those variables yields a wide range of possible results, even when the relationships among the variables are well known and modeled rigorously.

Even when there is certainty about the values of variables, the results of modeling will vary widely according to the assumptions and the conscious and unconscious analytical decisions of the modelers.

Anyone who relies on global climate models — with their many inadequately measured variables and many inadequately accounted-for variables (e.g., cloud formation) — to predict future “global” temperatures is either a fool or a charlatan. He is not a scientist. Nor is he a believer in “science” because he doesn’t know what it is.

Many thanks for this. One of my research topics with some med school students is how to improve clinical reasoning (CR) by making explicit the structure of material inferences, as well as their abductions. The type of mistake you point out, as well as its causes, is pretty clear in CR. Thanks once again.